If you're in your 30s like me and got a Commodore, an MSX or one of the other computers of that generation as a child, you might remember playing text-based adventure games. I was hooked on them at age 9, and they taught me English (well, they and the dictionary did :). Because of those games, I scored 9/10 in all my English classes. We had never heard of the Internet back then, but there were already English-language movies with subtitles on TV, so that helped a bit as well.

However, this post isn't meant to recollect those stories; it's about the design of the interpreters of those times. Lately I've become very interested in different designs and approaches to parsing, because parsing plays a central role in Natural Language Processing and, in that sense, in artificially intelligent programs that can deal with input in natural language. The Zork text adventures were the start of a whole range of games in the genre. One of the necessary features of such a game is that it accepts orders typed by human beings, processes them and then replies with the result of each command.

Zork actually had a very interesting design for handling this. The story itself was loaded into a z-machine, which executed it based on user input. If you want to experiment with such interpreters, have a look at frotz. There's actually a very low-key community that is still playing adventure games or, as they call it now, reading interactive fiction. They publish their work here and there.

Here's an archive for reference.

Initially, you may think that writing a work of interactive fiction or a text adventure is a lot of coding work, but there's actually a very cool application you can use to produce them, called Inform. There is no real coding involved; everything is typed in natural language. Since a computer has to run your story at some point, the only not-so-natural part is that you declare properties and attributes of things that you personally take for granted. To a computer it is not obvious that a bed can support a person and that you can get into it, so that needs to be declared explicitly in the story (the story eventually becomes the program). With Inform, a lot more people can write stories and adventures.

At this point, it's probably better to show an example:

    The troll can be conscious or unconscious.

    Check attacking the unconscious troll with something:
        say "The unconscious troll cannot defend himself: He dies.";
        say "Almost as soon as the troll breathes his last breath, a cloud of sinister black fog envelops him, and when the fog lifts, the carcass has disappeared.";
        remove the troll from play instead.

So notice how the troll is given attributes and properties, and how some sort of reasoning is inserted into the story just by applying natural language. The sequence of words (the phrase) used to manipulate the behaviour of the story (the program) is fixed. The descriptions, the text you put between the double quotes, are just sequences of characters and remain unintelligible to the computer. The story is first compiled into a slightly lower-level language, which a compiler can then translate into z-code:

    [ TakeDrink;
        canteen--;                        ! one drink less in the canteen
        if (canteen < 0) {
            thirst = 0; UpdateThirst();
        } else {
            thirst = 4;
            "Better watch for an oasis !";    ! a bare string is printed and the routine returns true
        }
    ];

This lower-level language is the language in which the older text adventures were coded. They used a lot of variables (often global) and contained very simple code that influenced gameplay. Basically, the only limit was the programmer. It probably feels a bit like scripting.

In Inform, declaring an item as a kind (a category of what it is, e.g. "The spoon is a thing") instantly gives it a couple of capabilities, and the program knows what can and cannot be done to it, because the Inform compiler combines the declaration with a default rule set (which may be overridden in the text). The (intended) interaction of the player with the environment is always communicated through verbs and nouns. Inform already ships with a large number of default rules, actions and behaviours for all these verbs and possible interactions. Per verb, the program maintains a number of rules about impossible actions, or actions that are temporarily impossible due to some state in the interpreter (maybe the player should eat spinach before they can open the door?). For further reading, the architecture of Inform is listed here.
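To make the idea of kinds with overridable default rules a bit more concrete, here is a minimal sketch in Python. It is not how Inform is implemented; `DEFAULT_RULES`, `Item`, `check` and the `ate_spinach` flag are all names invented for this illustration.

```python
# Hypothetical illustration only: Inform's real rulebooks are far richer.

# Each kind comes with a default set of actions that make sense for it.
DEFAULT_RULES = {
    "thing": {"examine", "take"},
    "door": {"examine", "open"},
}


class Item:
    def __init__(self, name, kind):
        self.name = name
        self.kind = kind
        # action -> function(world) that returns a refusal message or None
        self.overrides = {}


def check(item, action, world):
    """Return None if the action may proceed, otherwise a refusal message."""
    # Default rule set: the kind decides which actions are possible at all.
    if action not in DEFAULT_RULES[item.kind]:
        return f"You can't {action} the {item.name}."
    # Story-specific rule: may make the action temporarily impossible.
    rule = item.overrides.get(action)
    if rule is not None:
        return rule(world)
    return None


spoon = Item("spoon", "thing")
door = Item("door", "door")
door.overrides["open"] = lambda world: (
    None if world.get("ate_spinach") else "You are not strong enough yet."
)
```

With these definitions, `check(spoon, "open", {})` is refused by the default rules of the kind, while `check(door, "open", world)` depends on the state held in `world`.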

The interpreter for these programs executes so-called opcodes, basically just like the Java virtual machine does, so it is a kind of virtual machine. An interpreter is like an implementation of a virtual processor and memory on your computer that can do things at a slightly higher level of execution and protection (another way of saying that you're grouping CPU opcodes together in a useful way). In contrast to a function or method in a programming language, the interpreter makes no assumptions about the context in which it is used; it's only a dumb operational thing. A function or method in a general programming language exists within a very specific context of application.
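As a rough sketch of what "executing opcodes on a virtual processor with its own memory" means, here is a tiny stack machine in Python. The opcode names (`PUSH`, `ADD`, `STORE`, `LOAD`, `PRINT`, `HALT`) are made up for this example and are not the z-machine's actual instruction set.

```python
def run(program, memory_size=8):
    """Execute a list of (opcode, operand...) tuples on a tiny stack machine."""
    stack = []
    memory = [0] * memory_size   # the machine's own, bounded memory
    output = []
    pc = 0                       # program counter

    def cell(address):
        # The "protection" part: a program can only touch the virtual memory.
        if not 0 <= address < memory_size:
            raise IndexError(f"address {address} is outside virtual memory")
        return address

    while pc < len(program):
        opcode, *operands = program[pc]
        pc += 1
        if opcode == "PUSH":
            stack.append(operands[0])
        elif opcode == "ADD":
            right, left = stack.pop(), stack.pop()
            stack.append(left + right)
        elif opcode == "STORE":
            memory[cell(operands[0])] = stack.pop()
        elif opcode == "LOAD":
            stack.append(memory[cell(operands[0])])
        elif opcode == "PRINT":
            output.append(stack.pop())
        elif opcode == "HALT":
            break
        else:
            raise ValueError(f"unknown opcode {opcode!r}")
    return output


program = [
    ("PUSH", 2),
    ("PUSH", 3),
    ("ADD",),
    ("STORE", 0),
    ("LOAD", 0),
    ("PRINT",),
    ("HALT",),
]
result = run(program)
```

Note that `run` knows nothing about what the program is for; it only fetches the next opcode and carries it out, which is the "dumb operational thing" described above.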

Because Zork and the z-machine were initially developed for computers with very limited resources (64K? 128K?), a lot of optimization is done to cram the strings together (5 bits per character), and there are limits on the memory addresses of the interpreter. Nowadays those limits can be relaxed heavily, and this could possibly allow some very nice programs to be created using these virtual interpreters.
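The 5-bit packing can be sketched as follows. In the z-machine's text format, three 5-bit "Z-characters" are stored in each 16-bit word, and the top bit of the last word marks the end of the string. The sketch below is a deliberate simplification: it only handles lowercase letters (Z-characters 6 to 31) and spaces (Z-character 0), and ignores the shift codes and abbreviations of the real format. The function names are my own.

```python
PAD = 5  # Z-character 5 is a shift code; it is conventionally used as padding


def pack_zchars(text: str) -> bytes:
    """Pack lowercase letters and spaces into 16-bit words, 3 characters each."""
    zchars = []
    for ch in text:
        if ch == " ":
            zchars.append(0)
        elif "a" <= ch <= "z":
            zchars.append(ord(ch) - ord("a") + 6)
        else:
            raise ValueError(f"unsupported character in this sketch: {ch!r}")
    # Pad to a multiple of three (an empty string becomes one padded word).
    if not zchars or len(zchars) % 3:
        zchars += [PAD] * (3 - len(zchars) % 3)
    out = bytearray()
    for i in range(0, len(zchars), 3):
        a, b, c = zchars[i:i + 3]
        word = (a << 10) | (b << 5) | c
        if i + 3 == len(zchars):
            word |= 0x8000  # top bit set: this is the last word of the string
        out += word.to_bytes(2, "big")
    return bytes(out)


def unpack_zchars(data: bytes) -> str:
    """Inverse of pack_zchars for the same restricted character set."""
    chars = []
    for i in range(0, len(data), 2):
        word = int.from_bytes(data[i:i + 2], "big")
        for shift in (10, 5, 0):
            z = (word >> shift) & 0x1F
            if z == 0:
                chars.append(" ")
            elif z >= 6:
                chars.append(chr(z - 6 + ord("a")))
            # Values 1 to 5 are special codes in the real format; this sketch
            # only ever emits 5, as padding, and skips it when decoding.
        if word & 0x8000:
            break
    return "".join(chars)
```

Packed this way, the nine characters of "open door" take 6 bytes instead of 9, which mattered a lot on machines of that era.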

Read on here for more information on these z-machines and their relevance to AI.

The text in the link above mentions Prolog. I've come to both respect and hate Prolog. Prolog is also an interpreter, but it works with a very general-purpose language and is very much related to programming in the general sense. Prolog always attempts to prove that your statements unify to something (become true) and does everything it can to make it so. It is strongly related to first-order predicate logic and the research around it.
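For readers who haven't met unification before, here is a minimal sketch of the idea in Python. It is only an illustration, not how a real Prolog system works: terms are plain strings and tuples, names starting with an uppercase letter are treated as variables (as in Prolog), and there is no occurs check or backtracking over clauses. The `parent` facts are invented.

```python
def is_var(term):
    # Convention borrowed from Prolog: uppercase first letter means variable.
    return isinstance(term, str) and term[:1].isupper()


def walk(term, bindings):
    # Follow already known bindings until we reach a value or a free variable.
    while is_var(term) and term in bindings:
        term = bindings[term]
    return term


def unify(a, b, bindings=None):
    """Return bindings that make a and b equal, or None if that is impossible."""
    bindings = {} if bindings is None else dict(bindings)
    a, b = walk(a, bindings), walk(b, bindings)
    if a == b:
        return bindings
    if is_var(a):
        bindings[a] = b
        return bindings
    if is_var(b):
        bindings[b] = a
        return bindings
    if isinstance(a, tuple) and isinstance(b, tuple) and len(a) == len(b):
        # Compound terms unify when all their parts unify consistently.
        for x, y in zip(a, b):
            bindings = unify(x, y, bindings)
            if bindings is None:
                return None
        return bindings
    return None


fact = ("parent", "tom", "bob")
answer = unify(("parent", "tom", "X"), fact)
no_answer = unify(("parent", "ann", "X"), fact)
```

Here the query `("parent", "tom", "X")` succeeds by binding `X`, while the query about `ann` cannot be made true against this single fact.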

Now... you may know that when a program's behaviour needs to be extended or modified, you generally need to change the sources, recompile, kill the running application and restart it with the modified version. In that sense, a program on a computer is static. The architecture of hardware and software never really considered that programs might need to change at runtime. This is because they were executing tasks that were entirely thought out before the implementation. Then the implementation takes place, you run it and it can take care of business for a while.

An interpreter is an ideal environment for running experiments in which the program can change at runtime and must be able to modify its behaviour and its view of the world. If this interpreter can connect to programs online, natural language can also be used as a means to communicate ontologies to other programs or humans, in much the same way that the declarations in the adventure game above extend the ontology of that game (since that is essentially what they do). The difference is that in the adventure game the ontology is compiled beforehand and from that point onwards is static.

The bad thing about AI is that it's still feeling the pull of the early computer years and of the way we think about computers, or about what processing actually is. To most computer-savvy people, it's the most normal thing to kill applications, recode them, restart them and then observe what they do. I think we should probably regard computers slightly differently to make more progress in AI.

Possibly because of the argument above, ontologies for the web are also mostly expressed as some sort of static files. Is the idea that knowledge is static for a while and doesn't change? Also, even though these files look meaningful to us (although interspersed with nonsense brackets, dashes and other signs), the computer just sees xyzxyzxyz and absdfoip, and it's all nonsense to it, except for the form in which it appears and can be recognized later. Only by reasoning over those forms of appearance can it pretend to be processing them semantically. The gotcha is that the files look meaningful when we look at them, but that's because the words give us a short replay of the vision, hearing or feeling related to the terms.

The truth is that the computer has no knowledge at all about the meaning of the terms and just executes some other code when it sees xyzxyzxyz again, or when it knows that abcabcabc was in some way related to xyzxyzxyz. If you want to do yourself a favour and understand ontologies properly, recode an entire ontology in such nonsense terms, and it becomes clear how easy it is to be misled about how much computers really know.
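The recoding exercise is easy to try on a toy scale. The sketch below is purely illustrative: the three-term "ontology", the nonsense names and the single transitivity rule are all invented. It runs the same inference over the readable triples and over the renamed ones and shows that, to the program, the two are indistinguishable.

```python
def closure(triples, transitive):
    """Add (a, r, c) whenever (a, r, b) and (b, r, c) hold for the given r."""
    facts = set(triples)
    changed = True
    while changed:
        changed = False
        for (a, r1, b) in list(facts):
            for (c, r2, d) in list(facts):
                if r1 == r2 == transitive and b == c and (a, r1, d) not in facts:
                    facts.add((a, r1, d))
                    changed = True
    return facts


def rename(triples, mapping):
    """Replace every term by its counterpart in the mapping."""
    return {tuple(mapping[term] for term in triple) for triple in triples}


ontology = {
    ("cat", "is_a", "mammal"),
    ("mammal", "is_a", "animal"),
}
nonsense = {
    "cat": "xyzxyzxyz",
    "mammal": "abcabcabc",
    "animal": "absdfoip",
    "is_a": "qqq",
}

readable = closure(ontology, "is_a")
scrambled = closure(rename(ontology, nonsense), nonsense["is_a"])
```

The program "concludes" that `xyzxyzxyz qqq absdfoip` with exactly the same confidence as it concludes that a cat is an animal; only the second version looks like knowledge to us.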

Conclusion: The design of the z-machine interpreter is a very interesting concept for further AI research. One should not only consider interpreters to be relevant at runtime, but also integrate interpreters with compiler constructs, so that a phrase of input doesn't necessarily only modify the state of the interpreter, but may also modify the program itself (adding knowledge to the knowledge base). This allows one machine to talk to another in natural language (easier to debug), and it requires only one interface implementation, since humans use the same input channel. The interpreter should also be able to sync its state to disk and start up later from that saved memory, so that it can continue executing where it left off. An adventure game interpreter is coded with a goal in mind: executing until it somehow reaches an end state (you could visualize the interpreter as a Markov chain or even as a finite state machine), where knowledge is fixed and the transitions from one knowledge element to another, or from one contextual state to another, are determined by rules.
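A very small sketch of such an interpreter, assuming a toy phrase format that I made up for the occasion: "the X is a Y" adds knowledge to the knowledge base, "go Z" only changes the run-time state, and the whole thing can be saved to disk and resumed later. The class and method names are hypothetical, and real natural language input would of course need a proper parser.

```python
import json
import os
import tempfile


class Interpreter:
    def __init__(self):
        self.facts = {}            # knowledge base: name -> kind
        self.location = "start"    # ordinary run-time state

    def feed(self, phrase):
        words = phrase.lower().rstrip(".").split()
        # "the spoon is a thing": extends the knowledge itself.
        if len(words) == 5 and words[0] == "the" and words[2:4] == ["is", "a"]:
            self.facts[words[1]] = words[4]
            return f"Noted: the {words[1]} is a {words[4]}."
        # "go north": only modifies the state.
        if len(words) == 2 and words[0] == "go":
            self.location = words[1]
            return f"You go {words[1]}."
        # "what is the spoon": queries the knowledge base.
        if len(words) == 4 and words[:3] == ["what", "is", "the"]:
            kind = self.facts.get(words[3])
            if kind is None:
                return f"I know nothing about the {words[3]}."
            return f"The {words[3]} is a {kind}."
        return "I don't understand."

    def save(self, path):
        with open(path, "w", encoding="utf-8") as handle:
            json.dump({"facts": self.facts, "location": self.location}, handle)

    @classmethod
    def load(cls, path):
        with open(path, encoding="utf-8") as handle:
            data = json.load(handle)
        interpreter = cls()
        interpreter.facts = data["facts"]
        interpreter.location = data["location"]
        return interpreter


first = Interpreter()
first.feed("The spoon is a thing.")
first.feed("go north")
path = os.path.join(tempfile.mkdtemp(), "state.json")
first.save(path)

second = Interpreter.load(path)   # a later session picks up where we left off
reply = second.feed("what is the spoon")
```

The same `feed` channel serves a human typing at a prompt and another program sending phrases, which is the single-interface point made above.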

Ongoing question: This gives us one interesting question to ponder next: for an environment in which knowledge may be received and modified, and in which the states relating to that knowledge (the context) may be manipulated, who or what will set the goals for this interpreter to achieve, and what do those goals look like? Can the interpreter determine its own goals and negotiate with us or other computers to try to achieve them?

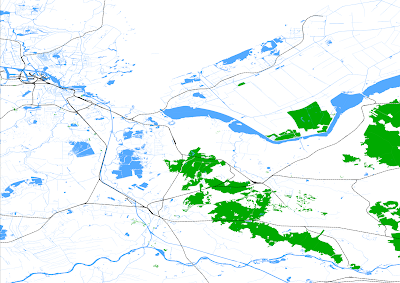

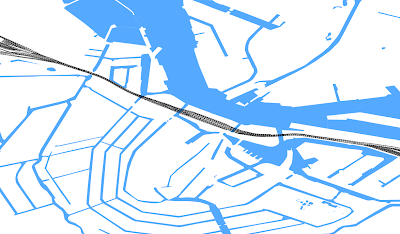

In a previous project, I worked with GIS data to show demographic data on Google Maps. The underlying database is PostgreSQL. This post shows how you can use (part of) the OpenStreetMap database (verify the license!) to extract features from the dataset and paint them onto an SVG or PNG image. Using the PostGIS database in combination with the loaded data, you can extract features (these are like gmaps overlays, or GIS layers) and include them in the image. You want to include railroads and regular roads? Not a problem! You only want to include waterways and the general shape of the country? Can do! The image above was constructed by taking the water, waterways, railroads and forest areas of Holland. Then I zoomed in a bit to show the level of detail.
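The painting step can be sketched without a database at hand. The Python below assumes the features have already been extracted as lists of (longitude, latitude) points per layer; the layer names, colours and coordinates are made up for illustration, and the scaling is a plain linear fit to the image rather than a proper map projection.

```python
def to_svg(layers, width=400, height=400):
    """layers: dict of layer name -> (stroke colour, list of polylines)."""
    points = [p for _, lines in layers.values() for line in lines for p in line]
    min_lon = min(lon for lon, _ in points)
    max_lon = max(lon for lon, _ in points)
    min_lat = min(lat for _, lat in points)
    max_lat = max(lat for _, lat in points)
    lon_span = (max_lon - min_lon) or 1.0   # avoid dividing by zero
    lat_span = (max_lat - min_lat) or 1.0

    def project(lon, lat):
        x = (lon - min_lon) / lon_span * width
        y = (max_lat - lat) / lat_span * height   # SVG's y axis points down
        return f"{x:.1f},{y:.1f}"

    parts = [
        f'<svg xmlns="http://www.w3.org/2000/svg" '
        f'width="{width}" height="{height}">'
    ]
    for name, (colour, lines) in layers.items():
        for line in lines:
            pts = " ".join(project(lon, lat) for lon, lat in line)
            parts.append(
                f'<polyline class="{name}" points="{pts}" '
                f'fill="none" stroke="{colour}"/>'
            )
    parts.append("</svg>")
    return "\n".join(parts)


# Invented sample data: one polyline per layer.
layers = {
    "waterway": ("blue", [[(4.0, 52.0), (5.0, 53.0)]]),
    "railway": ("black", [[(4.0, 53.0), (5.0, 52.0)]]),
}
svg = to_svg(layers)
```

Choosing which layers go into the `layers` dictionary is what decides whether the image shows railroads, waterways, forests or all of them.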

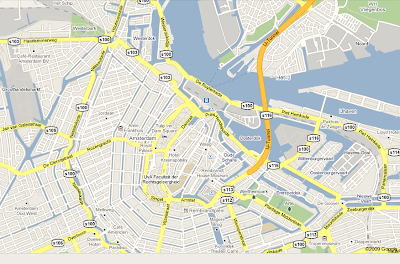

Notice how Google Maps has painted areas in a more generalized way, whereas the OpenStreetMap image is still in the original ESRI format. It should not be very difficult to start painting images of GIS data in a style like the Google one above, and then overlay the original high-resolution data on those images to indicate the positions of cafés, cinemas, etc.