The CSP solver discussed earlier is an effective way to calculate all possible solutions. Some problems, however, are either underconstrained or simply have a very large number of possible solutions. In those cases, exploring the entire solution space may be very expensive. Some application areas are also subject to a particular time window in which the solution must be produced (dynamic scheduling problems). Even within dynamic scheduling, the allowed time window differs: a scheduling system for shipping berths, for example, has a very different time window than a scheduling system for elevators.

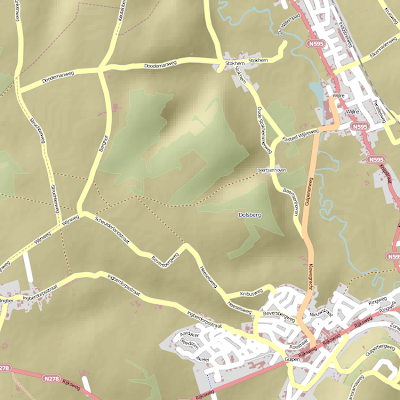

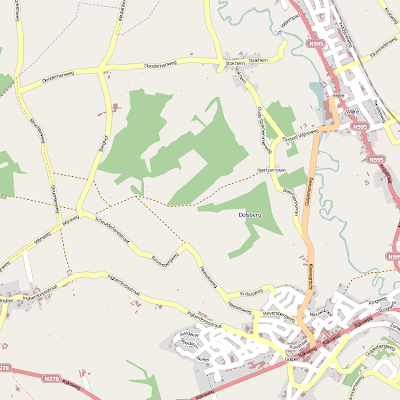

The image shown here is a 'fitness' landscape. It's an imaginary landscape that shows how effective or costly each solution is. The peaks are desirable solutions, whereas the valleys are much less desirable. In this case it's drawn as a one-dimensional graph, but for other solution spaces it could well be a 2D or even 3D surface. The number of dimensions grows with the number of variables in the solution and with the number of cost functions you use to score it.

This is where we get to the optimization part. A cost function determines the cost of a set of tuples that form (part of) a solution. It decides how desirable a certain solution is, and that is basically what drives the optimization. Ideally, one would like to find the best solution first. In reality, the best solution rarely comes first without a bit of backtracking. Even worse, practical planners give no guarantee that the best solution will be found, only a solution that is 'good enough' given a certain threshold.
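To make the idea of a cost function concrete, here is a minimal sketch. The berth example, the `PREFERRED_START` data and the name `waiting_cost` are all assumptions made up for illustration; they are not part of the solver discussed earlier. The point is only that the same function can score a partial and a complete set of tuples.

```python
from typing import Dict, Tuple

# Hypothetical representation: each ship maps to a (berth, start_hour) tuple.
Assignment = Dict[str, Tuple[int, int]]

# Assumed data: the hour at which each ship would like to start berthing.
PREFERRED_START = {"A": 8, "B": 9, "C": 8}


def waiting_cost(assignment: Assignment) -> int:
    """Total hours of delay over the ships assigned so far (lower is better)."""
    return sum(
        max(0, start - PREFERRED_START[ship])
        for ship, (_berth, start) in assignment.items()
    )


partial = {"A": (1, 8), "B": (1, 11)}
complete = {"A": (1, 8), "B": (1, 11), "C": (2, 9)}

print(waiting_cost(partial))   # cost of a partial solution
print(waiting_cost(complete))  # cost of a complete solution
```

Note that this sketch expresses desirability as a cost, so lower values correspond to the peaks in the landscape picture.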

If you've paid attention, you'll have noticed that a cost function only calculates the cost of a particular solution so far. So how does one actually form very good solutions? Doesn't this sound like trying out each possibility in turn until we're happy with the result? This is why the cost function must be paired with a heuristic function. The cost function calculates the actual cost or benefit so far. The heuristic function estimates the expected cost or benefit of assigning a particular value to a domain variable, looking over a slightly further horizon.
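The difference between the two functions is easiest to see in a toy case. The sketch below assumes a single berth with three hourly slots and three ships; `PREFERRED`, `SLOTS`, `cost_so_far`, `heuristic` and `estimated_total` are hypothetical names. The heuristic is deliberately optimistic: it gives every unassigned ship the free slot that suits it best and ignores that two ships may want the same slot.

```python
from typing import Dict

# Assumed toy problem: one berth, each ship occupies exactly one hourly slot,
# and there are as many slots as ships.
PREFERRED = {"C": 9, "A": 8, "B": 8}
SLOTS = [8, 9, 10]


def cost_so_far(assignment: Dict[str, int]) -> int:
    """Actual delay (in hours) of the ships that already have a slot."""
    return sum(max(0, slot - PREFERRED[ship]) for ship, slot in assignment.items())


def heuristic(assignment: Dict[str, int]) -> int:
    """Optimistic estimate of the delay still to come for unassigned ships."""
    free = [slot for slot in SLOTS if slot not in assignment.values()]
    estimate = 0
    for ship in PREFERRED:
        if ship not in assignment:
            estimate += min(max(0, slot - PREFERRED[ship]) for slot in free)
    return estimate


def estimated_total(assignment: Dict[str, int]) -> int:
    """Cost so far plus the expected cost over the remaining horizon."""
    return cost_so_far(assignment) + heuristic(assignment)


# Compare the three possible slots for ship C, the first ship to be placed.
for slot in SLOTS:
    choice = {"C": slot}
    print(slot, cost_so_far(choice), estimated_total(choice))
```

In this sketch the cost function alone cannot tell slot 8 and slot 9 apart for ship C, since both cost nothing so far. The heuristic notices that putting C in slot 8 takes away the slot that A and B both want, so slot 9 gets the better estimate.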

That is not all. The cost functions do not have to be commensurate or agree with one another. Sometimes a particular tuple choice is good for cost function A, but very bad for function B. So the relative importance of the cost functions must be determined before the solution process starts. Most cost functions are pretty linear, but it's also possible that continually preferring solutions that favour function A eventually raises its cost non-linearly, such that further into the solution space favouring function B becomes cheaper than favouring function A. Those problems are very difficult to solve.
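One common way to fix the balance up front is a weighted sum. That is an assumption on my part here, not the only option, and the two cost functions below (`delay_cost` and `distance_cost`) are hypothetical. The sketch shows how the same two schedules swap places in the ranking when the weights change.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical representation: ship -> (berth, start_hour).
Schedule = Dict[str, Tuple[int, int]]
CostFunction = Callable[[Schedule], float]


def delay_cost(schedule: Schedule) -> float:
    """Cost function A: hours of delay after an assumed opening time of 8."""
    return sum(max(0, start - 8) for _berth, start in schedule.values())


def distance_cost(schedule: Schedule) -> float:
    """Cost function B: how far each ship is from berth 1 (assumed to be the terminal)."""
    return sum(abs(berth - 1) for berth, _start in schedule.values())


def combined_cost(schedule: Schedule, weighted: List[Tuple[float, CostFunction]]) -> float:
    """Weighted sum of all cost functions; the weights are chosen before solving."""
    return sum(weight * cost(schedule) for weight, cost in weighted)


x = {"A": (1, 8), "B": (1, 10)}  # good for distance, bad for delay
y = {"A": (1, 8), "B": (3, 8)}   # good for delay, bad for distance

delay_matters = [(1.0, delay_cost), (0.25, distance_cost)]
distance_matters = [(0.25, delay_cost), (1.0, distance_cost)]

print(combined_cost(x, delay_matters), combined_cost(y, delay_matters))
print(combined_cost(x, distance_matters), combined_cost(y, distance_matters))
```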

There may also be situations in which you don't want the ideal solution, but want to allow for a certain overall flexibility. For example, in a schedule for berthing ships where you expect changes to occur, you might want to favour a solution where a minor change doesn't flip the entire schedule around, but where the change stays limited to one or two flipped tuple assignments (because the more tuples are flipped due to the change, the more costly the change becomes).
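One way to express that preference is to charge a penalty for every tuple that differs from the schedule already in use. The sketch below is only an illustration under assumed data: `PREFERRED`, `changed_tuples`, `cost_with_stability` and the penalty value are all made up. Ship B is assumed to arrive late, so that it cannot start before hour 10.

```python
from typing import Dict

Schedule = Dict[str, int]  # hypothetical: ship name -> start hour

PREFERRED = {"A": 8, "B": 9, "C": 10}


def delay_cost(schedule: Schedule) -> int:
    """Base cost: total hours of delay against the preferred start hours."""
    return sum(max(0, start - PREFERRED[ship]) for ship, start in schedule.items())


def changed_tuples(old: Schedule, new: Schedule) -> int:
    """Number of ships whose assignment differs from the published schedule."""
    return sum(1 for ship in old if new.get(ship) != old[ship])


def cost_with_stability(new: Schedule, old: Schedule, change_penalty: int) -> int:
    """Base cost plus a fixed penalty per flipped tuple."""
    return delay_cost(new) + change_penalty * changed_tuples(old, new)


old = {"A": 8, "B": 9, "C": 10}
reshuffled = {"A": 8, "B": 10, "C": 9}  # less delay, but two tuples flipped
minimal = {"A": 8, "B": 11, "C": 10}    # more delay, but only one tuple flipped

for penalty in (0, 2):
    print(
        penalty,
        cost_with_stability(reshuffled, old, penalty),
        cost_with_stability(minimal, old, penalty),
    )
```

Without a penalty the reshuffled schedule looks cheaper; with a penalty of 2 per flipped tuple, the schedule that touches only ship B comes out ahead.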

Optimization is rather difficult to do effectively; in general it's an NP-hard problem. So either you arrange the computing power to go through all solutions, or you find better methods to reach good solutions. There are two ways to do the optimization. You could optimize while solving the CSP (basically, by defining the order in which values are tried for a variable when tuples are picked), or you could generate a schedule that is more or less good and then try to improve it through local search.
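The first approach, ordering the values while solving, can be sketched with a tiny backtracking solver. This is not the CSP solver from the earlier post, just a stand-in with assumed names (`solve`, `delay`, `total_delay`) and assumed data. The only constraint is that no two ships share a slot, and the slots are deliberately listed in a poor order.

```python
from typing import Dict, List, Optional

PREFERRED = {"A": 8, "B": 8, "C": 9}
SLOTS = [10, 9, 8]  # deliberately listed in a poor order


def delay(ship: str, slot: int) -> int:
    return max(0, slot - PREFERRED[ship])


def total_delay(schedule: Dict[str, int]) -> int:
    return sum(delay(ship, slot) for ship, slot in schedule.items())


def solve(ships: List[str], assignment: Dict[str, int],
          order_values: bool) -> Optional[Dict[str, int]]:
    """Plain backtracking; returns the first complete assignment it finds."""
    if len(assignment) == len(ships):
        return dict(assignment)
    ship = ships[len(assignment)]
    # Constraint: one ship per slot.
    candidates = [slot for slot in SLOTS if slot not in assignment.values()]
    if order_values:
        # Value ordering: try the cheapest value for this ship first.
        candidates.sort(key=lambda slot: delay(ship, slot))
    for slot in candidates:
        assignment[ship] = slot
        result = solve(ships, assignment, order_values)
        if result is not None:
            return result
        del assignment[ship]
    return None


ships = ["A", "B", "C"]
unordered = solve(ships, {}, order_values=False)
ordered = solve(ships, {}, order_values=True)
print(unordered, total_delay(unordered))
print(ordered, total_delay(ordered))
```

With value ordering, the first solution found is cheaper than without it in this example. The ordering is greedy, though, so it makes a good first solution more likely rather than guaranteeing the best one.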

Local search basically means that you have a complete schedule, pick two tuples from the set and swap their valuations (preferably without violating the constraints) in the hope of finding a schedule that is slightly better. As the image shows, however, local search is a bit tricky, because you may have to force the solution through a valley. And how can you tell whether the solution, when going up another hill, is actually heading for a higher peak than the one you had before? And if you're just going to try this out, how do you prevent the exploration of the entire solution space, which is what you wanted to avoid in the first place?
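The swapping step itself is simple to write down. Here is a minimal sketch of a plain hill climber, with assumed data and the hypothetical names `total_delay` and `local_search`. Because it swaps the slots of two ships, the "one ship per slot" constraint stays satisfied automatically.

```python
from itertools import combinations
from typing import Dict

PREFERRED = {"A": 8, "B": 9, "C": 10}


def total_delay(schedule: Dict[str, int]) -> int:
    """Cost of a complete schedule: total hours of delay (lower is better)."""
    return sum(max(0, slot - PREFERRED[ship]) for ship, slot in schedule.items())


def local_search(schedule: Dict[str, int]) -> Dict[str, int]:
    """Keep swapping the slots of two ships while a swap lowers the cost."""
    current = dict(schedule)
    improved = True
    while improved:
        improved = False
        for x, y in combinations(sorted(current), 2):
            candidate = dict(current)
            candidate[x], candidate[y] = current[y], current[x]
            if total_delay(candidate) < total_delay(current):
                current = candidate
                improved = True
                break  # restart the scan from the improved schedule
    return current


start = {"A": 10, "B": 9, "C": 8}
result = local_search(start)
print(start, total_delay(start))
print(result, total_delay(result))
```

This version only ever accepts a swap that improves the schedule, so it stops at the first point where no single swap helps. That is exactly the weakness described above: it never walks through a valley, so it cannot tell whether a better schedule lies beyond one.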

Basically, optimization is a bit like navigating a landscape in fog. You get only slight clues that there's a hill or a valley, and at some point you must decide whether to carry on downhill in the hope of eventually finding another monster peak, or to stop searching altogether and call what you have your best solution. That is very difficult to do without a map, and constructing the map amounts to calculating the entire solution space.